Synthetic Evolution

A JUMP//CUT case study: A Breakthrough in AI Character Casting

The following is an addendum to the April 16th, 2026 keynote speech at United Talent Agency for members of the MovieLabs Industry Forum.

How do you cast a character in a film with no actors?

Let’s start with how you might go about creating a character for an AI film.

Imagine we are trying to cast Alexei Volkov, the main antagonist in Shepard’s Tone, the television series we are developing: The Wolf. A Soviet physicist shaped by his childhood, he is now leading a secret experiment at Chernobyl that could unhinge the fabric of existence. A man who carries the quiet weight of inevitability. He does not fight what is coming. He understands it, accepts it, and continues forward without illusion.

So how would you do it? Here’s the Frustration Ladder that we climbed.

Frustration Level 1: You might take that description and drop it into Nano Banana => But you’ll quickly see it triggers that AI Slop response that we are now all developing.

Frustration Level 2: You might paste it into ChatGPT and ask it to write the perfect prompt => better but still AI Slop response.

Frustration Level 3: You might build out a whole character bible, put that entire character bible into ChatGPT, then ask it to generate a prompt in JSON format, make custom adjustments in the JSON and keep going until something looks right. => still your mind says something is off.

Frustration Level 4: Then you might add ingredient images of actors you think are close. Nano Banana tries to block you because these are real people, but you get a couple through. And now you realize you’re going to be sued.

Like many folks trying to find a way out of AI Slop hell, we went through all the machinations trying to get to the quality we were seeking.

AI seems to be perpetually lost in the loop of “It’s nice to see the elephant dance, but I wouldn’t take it to the ballet.”

And this is where almost everyone stops and gives up or gives in to slop.

Like many, we tried, kept failing, and almost went crazy.

And just when we were convinced AI slop was unavoidable, we finally hit pay dirt by looking at the problem in a completely different way.

We realized, getting this right isn’t about a good prompt, or iterating until you are blind

- it’s about teaching AI something new - evolution.

In other words, we science-d the shit out of it.

Synthetic Evolution

This is what the next generation of AI Character Casting looks like - and it’s crazy, so hold on to your nerd hats.

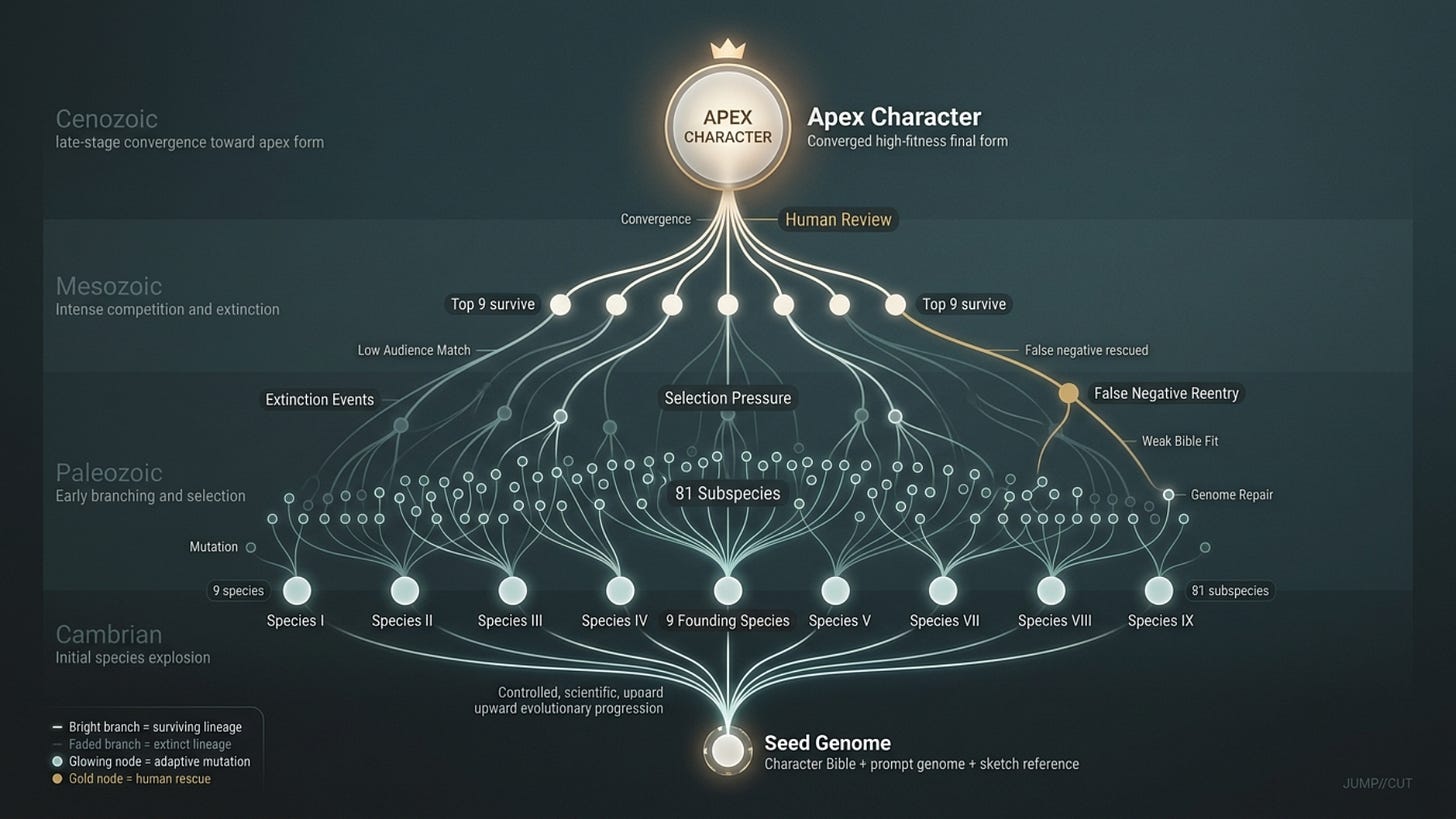

We call this process Synthetic Evolution: a closed-loop, self-referencing agentic casting methodology in which a character, or any other component in a film, is not simply designed once or even 50 times, but rather iteratively evolved through many tens of thousands of loops of generation, selection pressure, mutation, extinction, and survival until the right form emerges.

Instead of treating casting in a traditional sense, or even prompting in a traditional sense, Synthetic Evolution treats it like evolution itself.

In nature, evolution begins with a simple seed, a genetic starting point, from which variation emerges through random mutation and recombination. These variations are not guided; they simply occur, and the environment applies pressure. Organisms that fit their environment survive and reproduce, while those that don’t go extinct. Over many generations, this loop of variation, selection, and survival gradually produces organisms that appear almost perfectly adapted, what we might call an apex form.

We realized we could model character creation in the same way.

It is a structured search problem across a fitness landscape with tens of thousands of iterations that can branch, advance, thrive, or go extinct - all in a world defined by primary evolutionary forces.

In our case, we chose art as our god. These are what we prioritized.

Matching the author’s intent and vision for the character

Matching the vision of the team of artists leading the project (e.g. the combination of the writer, director, cinematographer, editor, set designer, wardrobe, etc.)

Matching the expectations of the intended audience

The end result is that we can use Synthetic Evolution to create a character that is the closest approximation to the intent of the writer and the team of artists, measured against audience reaction and expectations.

Note: JUMP//CUT supports the MovieLabs initiative to create standards for film and television production, and in doing so we have made a commitment that Synthetic Evolution complies with the MovieLabs 2030 Vision and its 10 Core Principles.

Step 0 — INTENT

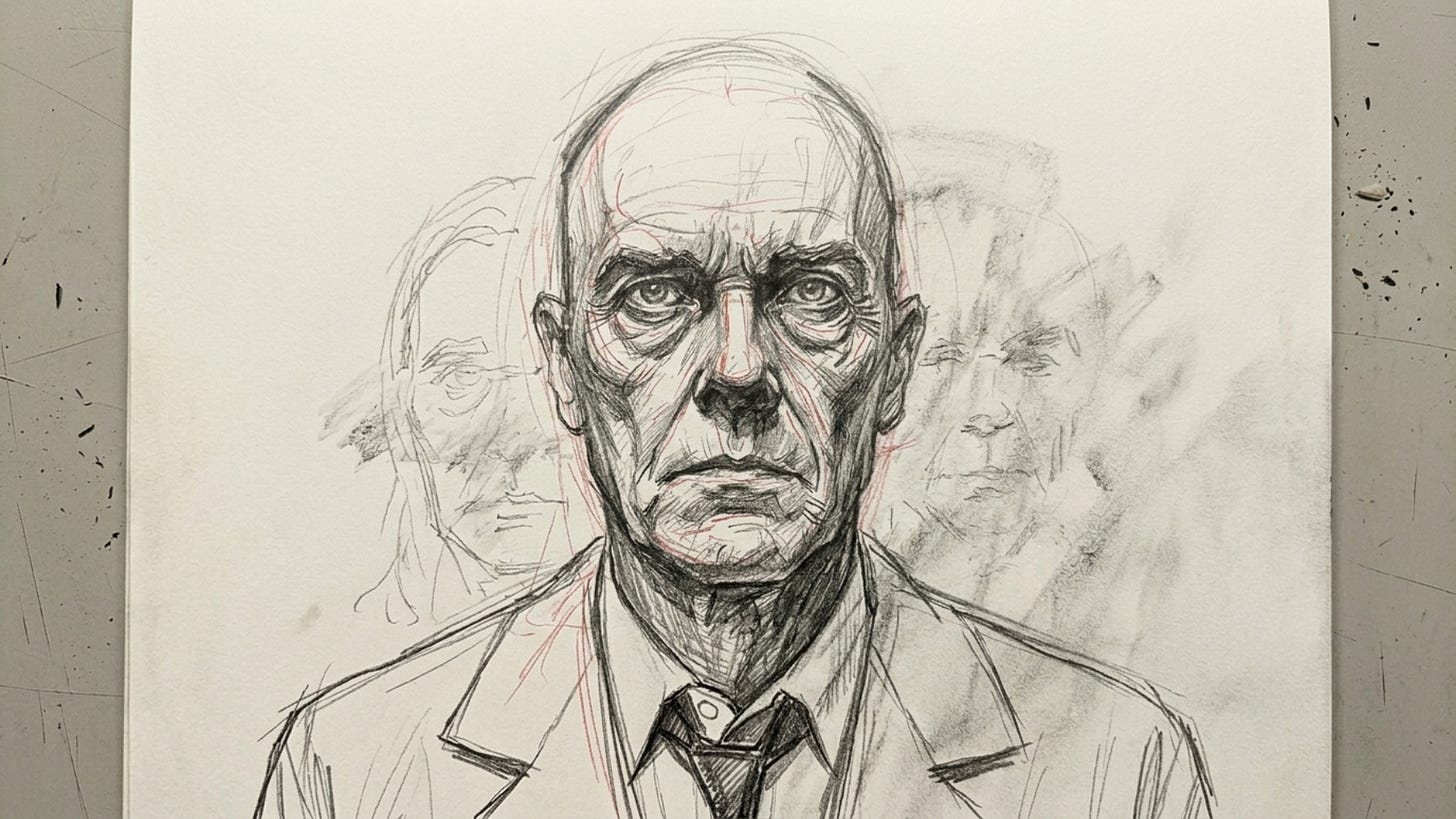

The entire thing starts with Intent: a conversation with the writer, the Character’s God, to begin the casting or, in our case, the creation of the character. And we do this in kind of a funny way: we bring in a sketch artist, as if we were trying to identify a perp who committed a crime. The goal is to suss out the sometimes fuzzy, sometimes clear beginnings of what the character looks like. Many times it’s not an exact image but a set of fuzzy variants, all of which are used as ingredients.

The result: we end up with a rough penciled sketch created through a conversation between two artists, a writer and a sketch artist, something like this below.

Step 1 — INCEPTION

Then we move to Inception, where the character’s genetic code is first written. An agent reads the script and produces an initial Character Bible based on story function, dialogue, plot role, emotional trajectory, symbolism, and how the character is meant to land in the mind of the audience. But that first pass is only a draft genome. The writer and the agent then go back and forth, refining the definition until the character becomes specific enough to exert selection pressure later. This matters because evolution only works if there is something stable being selected for. If the Character Bible is vague, the whole system drifts. If it is precise, every later stage has a meaningful standard against which to judge survival.

Step 2 — SEED

Once the Character Bible is strong enough, a Prompt Generator Agent translates that narrative genome into a structured JSON prompt. This prompt, together with the sketch used as a reference image, is the Seed: the first encoded expression of the character in generative form. In biological terms, if the Bible is the DNA, the seed prompt is the first viable zygote. It carries the core traits forward into a format the image model can express. The quality of the seed matters enormously, because even though later evolution can improve it, the seed establishes the initial population’s range of possibility.

The Seed is three things: 1) the zygote for our synthetic evolution, 2) how we prove provenance, and 3) how we will be able to protect our IP. More on that later.
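To make the idea of a Seed concrete, here is a minimal sketch of turning a few Character Bible fields into a structured JSON prompt. The field names (`subject`, `narrative_role`, `traits`, `reference_image`) and the file name `sketch.png` are hypothetical, since the actual schema is not described here. The SHA-256 fingerprint is only an assumed illustration of how a seed could be made traceable, not a description of how provenance is actually proven.

```python
import hashlib
import json

# Hypothetical Character Bible excerpt; field names are illustrative only.
character_bible = {
    "name": "Alexei Volkov",
    "role": "antagonist",
    "occupation": "Soviet physicist",
    "traits": ["quiet weight of inevitability", "accepts what is coming"],
}


def build_seed(bible: dict, reference_image: str) -> dict:
    """Translate a Character Bible into a structured prompt (the Seed)."""
    return {
        "subject": f"{bible['name']}, {bible['occupation']}",
        "narrative_role": bible["role"],
        "traits": list(bible["traits"]),
        "reference_image": reference_image,  # the pencil sketch from Step 0
    }


def fingerprint(seed: dict) -> str:
    """Stable SHA-256 over canonical JSON (an assumed traceability aid)."""
    canonical = json.dumps(seed, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


seed = build_seed(character_bible, "sketch.png")
print(json.dumps(seed, indent=2))
print(fingerprint(seed))
```

Because the JSON is serialized with sorted keys, the same seed always yields the same fingerprint, which is the property any later lineage tracking would need.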

Step 3 — GENESIS

The seed is then injected into Nano Banana to produce the first generation: nine distinct candidate images, which we treat as nine separate species. This stage is called Genesis. At this point the system is not trying to produce a final answer. It is trying to create diversity. Evolution needs variation to work. If all nine species are too similar, the system has little to select among. If they are meaningfully different while still descended from the same Bible, they create a workable population from which higher fitness can emerge.
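One way to check whether a first generation is "too similar" is to measure pairwise distance across the population. The sketch below is an assumption for illustration: it describes each species as a set of trait tags and uses Jaccard distance, whereas a real pipeline would more likely compare image embeddings. The tag names are made up.

```python
from itertools import combinations


def jaccard_distance(a: set, b: set) -> float:
    """0.0 means identical tag sets, 1.0 means nothing in common."""
    union = a | b
    return 1 - len(a & b) / len(union) if union else 0.0


def mean_diversity(population: list) -> float:
    """Average pairwise distance across all species in a generation."""
    pairs = list(combinations(population, 2))
    return sum(jaccard_distance(a, b) for a, b in pairs) / len(pairs)


# Nine near-clones versus nine species that share only one core trait.
clones = [{"gaunt", "grey beard"} for _ in range(9)]
varied = [{"gaunt", f"variant-{i}"} for i in range(9)]

print(mean_diversity(clones))
print(mean_diversity(varied))
```

A generation that scores near zero gives selection nothing to work with, so it would be a candidate for regenerating the Genesis batch.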

Step 4 — EVAL

Next comes Eval, where the species encounter their environment for the first time. A swarm of specialized Claude bots, each trained or tuned for a specific evaluative skill, analyzes the nine species across two primary vectors. The first is Character Bible Match, which measures how well the image embodies the writer’s intent. The second is Synthetic Audience Reaction, which estimates how different audience segments are likely to perceive the character. In evolutionary language, this is the first serious test of fitness. The species are no longer just images. They are now organisms exposed to ecological pressure.

To make that “environment” real, we don’t rely on a single judge. We construct it as a panel of specialized expert agents, an agent swarm, in which each agent applies a different kind of pressure that the character must survive. Some of these agents generalize to any film, some to any film within the genre of the film we are creating, and some are ephemeral agents created on the fly and custom-rigged just for Shepard’s Tone.

Character Bible Match — Expert Agents (Shepard’s Tone) - a few examples

Narrative Function Agent - Determines whether the character clearly embodies their role in the story such as catalyst, witness, or antagonist

Thematic Alignment Agent - Evaluates whether the character reinforces core themes like inevitability, escalation, and unseen systems

Psychological Continuity Agent - Checks that the internal state expressed in the image matches the Character Bible

Backstory Integrity Agent - Assesses whether the audience can infer the character’s lived history such as exile, obsession, and institutional past

Transformation Signal Agent - Looks for evidence that the character has changed over time from before to after

Synthetic Audience Reaction — Expert Agents created to measure whether we are or are not scratching the itch we are trying to scratch.

Prestige Viewer Agent - Evaluates whether the character feels credible and complex for viewers of shows like Chernobyl and Dark

General Audience Agent - Assesses whether the character is immediately understandable without explanation

Memorability Agent - Determines whether the character will be remembered after viewing

Emotional Response Agent - Measures whether the character evokes the intended feeling such as unease, trust, or fascination

Archetype Alignment Agent - Evaluates whether the character aligns with or meaningfully evolves the expectation of a Russian scientist antagonist
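The two-vector swarm described above can be sketched as a registry of scoring functions grouped by vector. Everything here is a stand-in: the real agents are model-backed evaluators looking at images, while these stubs just read hypothetical trait strengths (0 to 1) off a candidate and scale them to 0-10. The trait names and the `trait_agent` helper are assumptions for illustration.

```python
from typing import Callable, Dict

Candidate = Dict[str, float]          # hypothetical trait strengths in [0, 1]
Agent = Callable[[Candidate], float]  # returns a score on a 0-10 scale


def trait_agent(trait: str) -> Agent:
    """Build a stub agent that scores a single trait; real agents are LLM calls."""
    return lambda candidate: 10.0 * candidate.get(trait, 0.0)


# Agents grouped under the two primary vectors.
SWARM: Dict[str, Dict[str, Agent]] = {
    "bible_match": {
        "thematic_alignment": trait_agent("inevitability"),
        "backstory_integrity": trait_agent("lived_history"),
    },
    "audience_reaction": {
        "memorability": trait_agent("asymmetry"),
        "emotional_response": trait_agent("unease"),
    },
}


def evaluate(candidate: Candidate) -> Dict[str, Dict[str, float]]:
    """Run every agent in the swarm and keep scores grouped by vector."""
    return {
        vector: {name: agent(candidate) for name, agent in agents.items()}
        for vector, agents in SWARM.items()
    }


species_3 = {"inevitability": 0.75, "lived_history": 0.5, "asymmetry": 0.25, "unease": 0.5}
report = evaluate(species_3)
print(report)
```

Keeping agents in a plain registry means a film-specific agent can be added or dropped without touching the evaluation loop.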

Step 5 — SCORE CARD

Those evaluations are then synthesized into the Score Card. This is not a single score but a multidimensional fitness profile for each species. One candidate may score high on Bible alignment but low on audience reaction. Another may be memorable but weak on thematic fit. The score card gives the system a topography of adaptation. It shows not only which organisms are strong, but where they are strong and where they are vulnerable. In natural selection terms, this is the measurement of fitness across multiple environmental dimensions rather than a single survival axis.
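A Score Card of this kind can be sketched as a per-vector average plus a pointer to the weakest dimension. The scores below are invented for illustration, and averaging within a vector is an assumption; the point is only that the profile stays multidimensional instead of collapsing to one number.

```python
from statistics import mean

# Hypothetical raw agent scores (0-10) for two species.
raw_scores = {
    "species_1": {
        "bible_match": {"narrative_function": 9.0, "thematic_alignment": 8.0},
        "audience_reaction": {"memorability": 4.0, "emotional_response": 5.0},
    },
    "species_2": {
        "bible_match": {"narrative_function": 6.0, "thematic_alignment": 5.0},
        "audience_reaction": {"memorability": 9.0, "emotional_response": 8.0},
    },
}


def score_card(evals: dict) -> dict:
    """Summarize each vector and flag the single weakest dimension."""
    vectors = {vector: mean(scores.values()) for vector, scores in evals.items()}
    flat = {
        f"{vector}.{agent}": score
        for vector, scores in evals.items()
        for agent, score in scores.items()
    }
    return {"vectors": vectors, "weakest": min(flat, key=flat.get)}


cards = {name: score_card(evals) for name, evals in raw_scores.items()}
for name, card in cards.items():
    print(name, card)
```

Here species_1 is strong on Bible alignment but weak on audience reaction, and species_2 is the reverse, which is exactly the kind of trade-off the next stage has to act on.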

Step 6 — ADAPTATION

The next stage is what I would call Adaptation. Here the Casting Agent, working with its swarm, studies the diffs between species and recommends mutations intended to improve fitness. These are not random changes. They are targeted adaptations. A species may need more authority, more sadness, less polish, a stronger sense of having belonged to the system once, or more visual asymmetry to increase memorability. Adaptation is where the system becomes intelligently evolutionary rather than merely generative. It is no longer producing candidates blindly. It is introducing beneficial mutations based on observed selection pressure.

Here are five mutation suggestions that would emerge from the Adaptation phase, each grounded in improving fitness against the Character Bible and audience response:

The forehead reads too high and elongated, subtly signaling Western or Germanic features; compress the cranial proportion and adjust brow structure to align more closely with Eastern European physiognomy

The facial scar feels generic and over-signaled; replace it with a more subtle asymmetry or texture that suggests lived experience without relying on cliché visual shorthand

The jawline lacks structural authority; square the chin and tighten mandibular definition to reinforce leadership presence and ideological conviction

The eyes appear too alert and reactive; introduce slight heaviness in the eyelids and deepen orbital shadows to convey sustained cognitive load and obsession

The grooming is too clean and maintained for a post-exile character; introduce controlled neglect in hair and beard to reflect prolonged focus and detachment from social norms
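Suggestions like these eventually have to land as edits to the prompt JSON. A minimal sketch, assuming each accepted mutation is a path into the prompt plus a new value: the keys (`face`, `scar`, `eyelids`, `grooming`) and values are hypothetical, and the parent prompt is copied so the original species stays intact for comparison.

```python
import copy

# Hypothetical parent prompt; keys and values are illustrative only.
parent = {
    "face": {"scar": "prominent", "eyelids": "alert", "jaw": "narrow"},
    "grooming": "clean",
}

# Each targeted mutation: (path into the JSON, replacement value).
mutations = [
    (("face", "scar"), "subtle asymmetry"),
    (("face", "eyelids"), "slightly heavy"),
    (("grooming",), "controlled neglect"),
]


def apply_mutation(prompt: dict, path: tuple, value: str) -> dict:
    """Return a mutated copy of the prompt, leaving the parent untouched."""
    child = copy.deepcopy(prompt)
    node = child
    for key in path[:-1]:
        node = node[key]
    node[path[-1]] = value
    return child


child = parent
for path, value in mutations:
    child = apply_mutation(child, path, value)

print(parent)
print(child)
```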

Step 7 — EVOLUTION

Once the Casting Agent chooses which adaptations to accept, it modifies the JSON for each of the nine species and breeds nine subspecies from each one. This yields 81 candidates total. This stage is Evolution proper: descent with variation. Each original species now gives rise to a family line of related offspring, each expressing slight genetic differences. In the same way that a biological lineage explores adjacent possibilities through mutation and recombination, each species explores nearby aesthetic and narrative possibilities through structured prompt modifications.
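The nine-to-81 fan-out can be sketched as follows. This is an illustration under assumptions: each child takes a random non-empty subset of the accepted mutations, which is one plausible way to get "slight genetic differences" between siblings but is not stated in the process itself. The prompt keys and the `breed` helper are hypothetical.

```python
import copy
import random


def breed(parent: dict, mutation_pool: list, rng: random.Random, n_children: int = 9) -> list:
    """Produce n_children variants, each with a random subset of mutations."""
    children = []
    for _ in range(n_children):
        child = copy.deepcopy(parent)
        k = rng.randint(1, len(mutation_pool))  # at least one mutation per child
        for key, value in rng.sample(mutation_pool, k):
            child[key] = value
        children.append(child)
    return children


# Nine hypothetical surviving species, all starting from the same flat prompt.
species = [
    {"id": i, "grooming": "clean", "eyelids": "alert", "jaw": "narrow"}
    for i in range(9)
]
pool = [
    ("grooming", "controlled neglect"),
    ("eyelids", "slightly heavy"),
    ("jaw", "squared"),
]

rng = random.Random(42)  # fixed seed so the run is repeatable
population = [child for parent in species for child in breed(parent, pool, rng)]
print(len(population))  # 9 species x 9 subspecies = 81
```

Each child keeps its parent's `id`, so every candidate in the population of 81 can still be traced back to its species line.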

Step 8 — SELECTION

The 81 subspecies are then re-evaluated, rescored, and subjected to Selection. Only the top nine survive. The other 72 die out. Their lineages go extinct. This extinction framing is not just metaphorical flourish; it captures the logic of the process. Most variations should not survive. If everything lives, nothing is being selected. Extinction is proof that the search is real. Survival means a species earned its place under current pressure.
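The cull itself is simple to sketch once each candidate has a single comparable fitness number. How the multidimensional score card collapses into that number is not specified, so the `fitness` field and the random placeholder scores below are assumptions.

```python
import heapq
import random

rng = random.Random(7)  # fixed seed so the run is repeatable

# 81 hypothetical candidates with placeholder fitness scores (0-10).
population = [{"id": i, "fitness": round(rng.uniform(0, 10), 2)} for i in range(81)]


def select(candidates: list, survivors: int = 9) -> list:
    """Keep only the fittest candidates; everything else goes extinct."""
    return heapq.nlargest(survivors, candidates, key=lambda c: c["fitness"])


kept = select(population)
extinct = len(population) - len(kept)
print(len(kept), extinct)  # 9 72
```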

Step 9 — RECURSION

Those nine survivors return to Eval, and the loop begins again at Step 4. This recursive cycle of generation, evaluation, adaptation, evolution, and selection can run over and over through the night, generating tens of thousands of variant character species that live, die, thrive, or go extinct.

By morning, the team is no longer looking at raw first guesses. It’s looking at a contact sheet of 9 species variants that have gone from the Cambrian period to the end of the Paleozoic era.

These are organisms that have already survived several hundred, if not a thousand, rounds of environmental pressure.

That compresses a vast amount of trial and error into a single overnight cycle.
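To see how the numbers add up, here is a toy version of the overnight loop. It is a sketch under heavy assumptions: a "genome" is a single number, fitness is closeness to a made-up target of 0.8, and mutation is Gaussian noise, none of which reflects the real image pipeline. What it does show is the bookkeeping: nine species at Genesis, then 81 new candidates per round with nine survivors.

```python
import random

TARGET = 0.8  # stand-in for the look the Bible and audience are pulling toward


def fitness(genome: float) -> float:
    """Closer to the target is fitter (0.0 is a perfect match)."""
    return -abs(genome - TARGET)


def run_overnight(rounds: int, seed: int = 0):
    rng = random.Random(seed)
    species = [rng.random() for _ in range(9)]  # Genesis: nine species
    generated = len(species)
    for _ in range(rounds):
        # Evolution: each survivor breeds nine slightly mutated offspring.
        offspring = [
            min(1.0, max(0.0, parent + rng.gauss(0.0, 0.05)))
            for parent in species
            for _child in range(9)
        ]
        generated += len(offspring)
        # Selection: only the top nine of the 81 survive.
        species = sorted(offspring, key=fitness, reverse=True)[:9]
    return species, generated


survivors, generated = run_overnight(rounds=300)
print(len(survivors), generated)  # 9 24309
```

At 81 candidates per round, 300 rounds already produce 24,309 variants, which is how a few hundred rounds turn into tens of thousands of candidates in one night.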

Step 10 — HUMAN REVIEW

In the morning, the writer, director, editor, or broader creative team reviews exemplar survivors from each species line. This is where human judgment re-enters as a superordinate evolutionary force. The team may decide to keep or kill entire species lines. Some lineages continue. Others become extinct. And some new species are introduced to replace dead branches. Human review is essential because no synthetic evaluator fully captures taste, intuition, symbolism, or the nonverbal “yes” that a great character can generate.

Additionally, if the team sees a feature they like in this review, say a micro-expression in one image or the hairline in another, those selections are fed back into the system. We are not only reviewing monolithic images but also the components within them. These components are built out as their own JSON files and injected back into the species. Think of this as Selection Bias.

Step 11 — FALSE NEGATIVES AND GENOME REPAIR

One of the most important moments in the process happens when humans love an image that scored poorly. That is not treated as a contradiction. It is treated as a false negative. In evolutionary terms, this suggests the environment is misconfigured. Usually the problem is not the organism; it is the fitness function. Something important is missing from the Character Bible or underweighted in the evaluation swarm. So the Bible is updated, the genome is repaired, and the species is placed back into the population. This is how the system learns. Not just by improving the candidates, but by improving its own definition of what “fit” means.

In our testing, we loved the image of the character with long, unkempt hair, but it scored low. The reason: we had forgotten to put in his Character Bible that he had been part of the Soviet science structure but had gone rogue.
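Spotting false negatives can be sketched as a comparison between human picks and swarm scores. The candidate names, scores, and the 7.0 cutoff below are all hypothetical; the idea is only that anything the team loved but the swarm scored below the cutoff gets flagged as a prompt to revisit the Character Bible, not discarded.

```python
def find_false_negatives(scores: dict, human_picks: set, threshold: float = 7.0) -> list:
    """Return candidates the team loved that the swarm scored below threshold."""
    return sorted(cid for cid in human_picks if scores.get(cid, 0.0) < threshold)


# Hypothetical swarm scores (0-10) and the team's morning favorites.
scores = {"long_unkempt_hair": 4.5, "clean_cut": 8.9, "scarred": 6.0}
human_picks = {"long_unkempt_hair", "clean_cut"}

flagged = find_false_negatives(scores, human_picks)
for candidate in flagged:
    # A flag means "audit the fitness function", not "the humans are wrong".
    print(f"{candidate}: review the Character Bible for a missing trait")
```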

Step 12 — NEW ERAS

Once the team of artists has made its adjustments to the genome (the monolithic and microlithic choices of entire characters or character traits), reviewed false negatives for holes in the Character Bible, and made corrections, the entire loop runs again overnight.

Every day a new era begins, until we get to APEX Characters.

Step 13 — THE RISE OF APEX CHARACTERS

After enough cycles, usually over the course of days and sometimes weeks, the population of possible Apex Characters (the candidate species for final character selection) begins to converge and surface. The surviving species stop feeling like guesses and start feeling like inevitabilities. At that point you can often reach a character whose appearance scores in the mid-90s or higher for likelihood of being the right look.

The result is 9 Character Candidates, and we choose one. For now.

Step 14 — BEHAVIORAL EVOLUTION

We will write extensively about this in the future, but the same machinery can then be applied to everything beyond appearance. How does the character talk? Walk? Pause? What micro-expressions do they emit under fear, grief, shame, authority, seduction, rage, or resignation? How do they respond in moral dilemmas? How do they hold silence? Each of these can be treated as an evolvable trait. The character ceases to be a static image and becomes a dynamic organism.

Again, the end result of each episodic evolution is 9 candidates, from which the team of artists makes a selection. Again, for now. You’ll see why in a moment.

Step 15 — ENSEMBLE CO-EVOLUTION

Then we realized, importantly, characters do not exist alone. They exist in relation to other characters. So the system can evolve chemistry and compatibility as well. One species may score 9.8 in isolation but only 7.0 in pairwise chemistry with a crucial counterpart. Another may score 9.2 alone but 10.0 in ensemble fit.

And what matters more than individual scoring is chemistry scoring.

In nature, fitness is often relational, not absolute. The same is true here. Characters must be selected not only for individual strength but for how well they coexist inside the dramatic ecosystem.

Just as miscasting an individual character can torpedo a film, so can poor chemistry between two seemingly well-cast characters.
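Using the two example species above, a relational fitness score can be sketched as a weighted blend of solo and chemistry scores. The 0.6 weight on chemistry is an assumption chosen only to reflect that chemistry counts for more than individual scoring; the actual weighting is not given.

```python
def ensemble_score(solo: float, chemistry: float, chemistry_weight: float = 0.6) -> float:
    """Blend individual and pairwise scores; chemistry_weight is assumed."""
    return (1 - chemistry_weight) * solo + chemistry_weight * chemistry


# (solo score, chemistry score) for the two example species.
candidates = {
    "species_a": (9.8, 7.0),
    "species_b": (9.2, 10.0),
}

ranked = sorted(
    candidates,
    key=lambda name: ensemble_score(*candidates[name]),
    reverse=True,
)
for name in ranked:
    print(name, round(ensemble_score(*candidates[name]), 2))
```

Under this weighting the species with the lower solo score ranks first, because its chemistry advantage outweighs the gap in individual strength.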

The Outcome

The result is not just “better casting.” It’s predictability.

It is a scientific-feeling creative workflow in which characters are discovered through iterative pressure rather than declared by fiat. They are born, tested, mutated, bred, culled, rescued, corrected, and reintroduced. Some lineages thrive. Others disappear. The best ones survive because they fit the world, the author, the audience, and the surrounding cast. Over time, the process produces something rare: a character that no longer feels chosen, but evolved.

Some findings that you might find interesting

1. From Scar to Tattoo to Dilated Eye

Early iterations explored a facial scar and then a small tattoo as markers of trauma. Both read as generic. The Synthetic Audience Agents flagged them as familiar tropes that did not differentiate the character. When we introduced the permanently dilated eye, the response changed immediately. Among primary television viewers aged 40 to 60, there was a strong associative signal tied to David Bowie, whose own eye condition was widely remembered as the result of childhood violence. Without exposition, the audience inferred abuse, damage, and psychological depth. The system flagged this as a high-signal feature and it became foundational to the character.

2. Accidental German vs Russian Misclassification

In early passes, the Synthetic Audience Agents consistently classified Alexei as German rather than Russian. This was not obvious to the human eye. The issue traced back to subtle facial proportions, a narrower jaw, a higher forehead, and a more symmetrical structure that aligned with Western European archetypes. When we investigated further, it became clear the writer had originally set the story in Germany, and that bias had unintentionally carried through into the character description. The system forced a correction. We broadened the jawline, adjusted cranial proportions, and introduced slight asymmetries that shifted perception. Subsequent tests aligned strongly with “Russian physicist” across audience segments.

3. Over-Symmetry Reducing Unease

Initial renders trended toward facial symmetry because the model optimized for aesthetic balance. The agents flagged this as a defect. For Alexei, symmetry reduced tension and made him feel too composed, too resolved. His identity required a subtle instability. We reintroduced asymmetry in the eyes, cheek tension, and skin texture, particularly around the damaged eye. This restored a low-grade unease that audiences consistently responded to, even if they could not articulate why.

4. Gaze Reading as Passive Instead of Inevitable

In several iterations, Alexei’s gaze read as observant but passive. The Psychology and Audience Agents flagged this as a critical failure. The character is not reacting to events; he understands them before they unfold. His gaze needed to feel anticipatory, almost pre-resolved. We adjusted eye focus, reduced micro-movements, and altered lid tension. The change was subtle, but the audience perception shifted from “watching” to “knowing,” which aligned with his role as a symbol of inevitability.

5. Accidental Softening of Threat Profile

At one point, small changes to the brow and mouth introduced a trace of warmth. Individually, these changes seemed harmless. But the agents flagged a drift in aggregate perception. Among younger viewers, Alexei began to read as contemplative rather than dangerous. This conflicted with his narrative role. We tightened the brow, reduced upward tension, and introduced slight compression in the lips. The result restored ambiguity without losing the possibility of redemption, which was the balance we were aiming for.

Open Source and Free

We decided to open source the Synthetic Swarm Bot platform because we kept coming back to a simple belief: creative tools should not be gated. The ability to make something meaningful should not depend on access, budget, or permission.

So we’re putting this in the open. It will be free and open source in the truest sense. You can download it, modify it, run it yourself, and shape it however you want.

For those who don’t want to manage infrastructure, we are considering offering a hosted version as a service. We’ll have to see on that one: if we are footing the hosting and service costs, we’ll have to charge something for it.

If you care about a specific kind of storytelling, you’ll be able to build agents that reflect that. Agents for murder mysteries. Agents for children’s shows. Agents for documentaries, thrillers, or forms that don’t exist yet. Those agents won’t live in isolation. They will plug into the same system, interact with others, and shape how characters and stories evolve.

This only gets better if more people bring their perspective into it. If this feels interesting to you, share it. The system improves as the range of minds inside it expands.

If you want to follow along and be notified when we release it - just follow this Substack.

Always Educating

We’re also going to show our work.

This won’t live as a black box. We’ll publish a series of talks and case studies where we walk through real projects step by step. Shepard’s Tone is just the beginning. You’ll see where the system works, where it breaks, and how decisions actually evolve under pressure.

The goal is not to present finished answers. It’s to make the process usable. Something you can take, adapt, and build on in your own work.

Because this is bigger than casting. It’s a different way of making things.

How to join

JUMP//CUT is our attempt to build the first open, professional-grade platform for film and television in the AI era. If you want to be part of it, there are two ways in.

You can join the core open source foundation ( which we are setting up now )

You can contribute directly by building agents and systems that reflect what you care about. ( entire genres, workflows, and creative perspectives can be added to the network )

We’re just starting to see what this becomes. If you want to help shape it, come build with us. Just take the plunge and email me at steve.e.newcomb@gmail.com.

In kindness, hope, and joy,

Steve